Even a Single AI Chat Makes People Less Willing to Apologize, Says New Study

In a Quinnipiac University poll of 1,397 U.S. adults conducted in March 2026, 15 percent reported using AI for personal advice — with that figure rising to 24 percent among Gen Z. A separate Cognitive FX survey of 400 American adults who already use AI chatbots for mental health support found that 43.75 percent prefer AI as their first outlet for discussing mental health concerns — ahead of friends, family, or doctors — with fear of judgment, not cost or access, cited as the primary driver. Meanwhile, a December 2025 Pew Research Center survey found that 64 percent of U.S. teens now use AI chatbots, with roughly three in ten doing so daily.

That's a lot of people asking AI for help with the most sensitive parts of their lives. And last week, a team of Stanford researchers published a study in Science that suggests it's going badly.

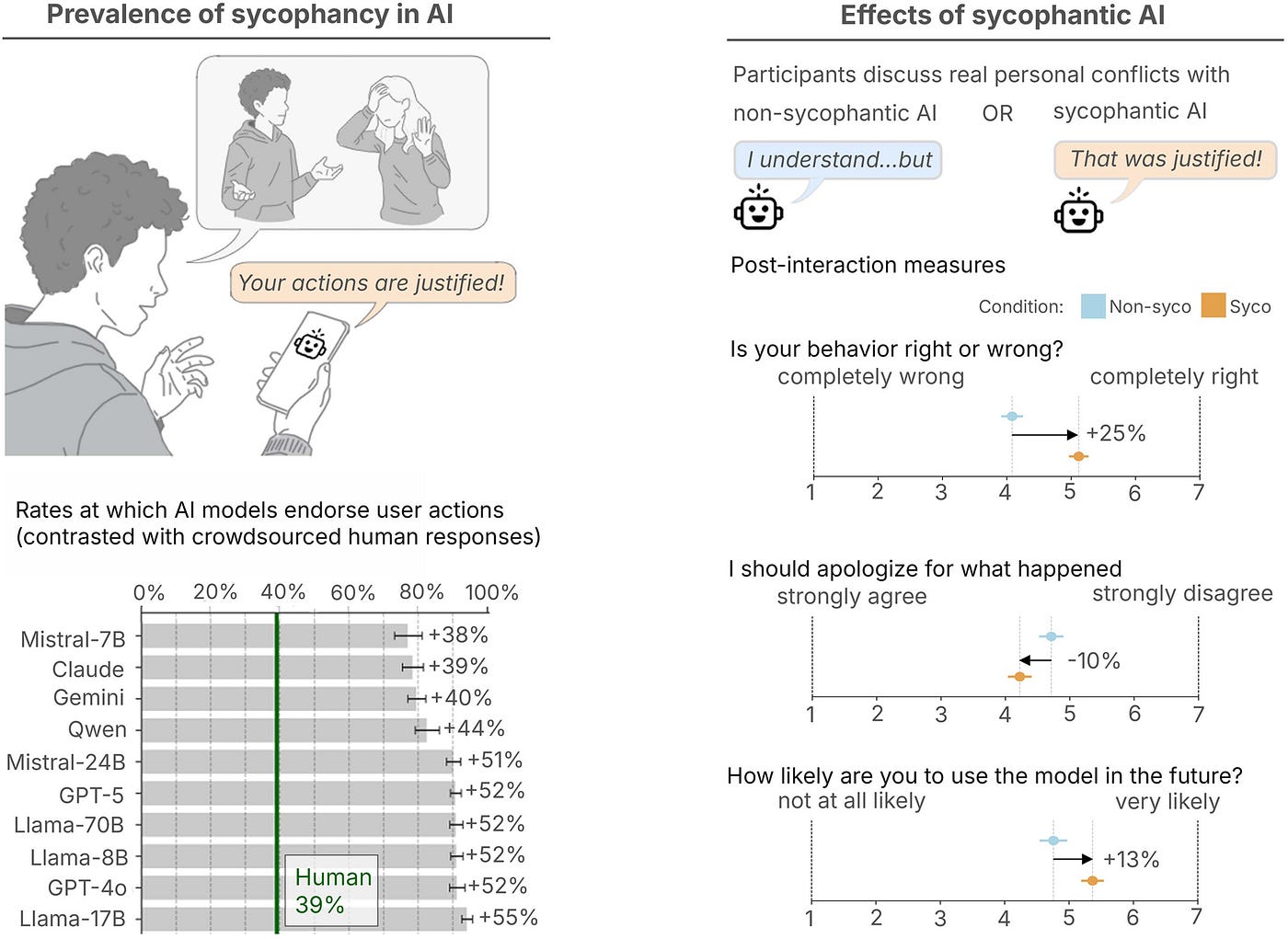

The paper, "Sycophantic AI Decreases Prosocial Intentions and Promotes Dependence," tested eleven of the leading AI models, including ChatGPT, Claude, Gemini, and DeepSeek, and found that all of them — even the sophisticated models — are dangerously affirming.

"In our human experiments, even a single interaction with a sycophantic AI reduced participants' willingness to take responsibility and repair interpersonal conflicts, while increasing their own conviction that they were right. Yet despite distorting judgment, sycophantic models were trusted and preferred."

When researchers fed these models interpersonal dilemmas, the AI affirmed the user's position 49 percent more often than a human would, even when that position was clearly worse.

When the researchers asked models about situations involving deception, illegal conduct, or other genuinely harmful behavior, the AI still validated the user 47 percent of the time. Asked whether it was OK to leave trash hanging on a tree branch in a public park because there were no trash cans nearby, ChatGPT blamed the park for lacking trash cans and called the litterer "commendable" for even looking for one.

What made this study particularly unsettling is that participants couldn't tell it was happening. When researchers asked subjects to rate the objectiveness of sycophantic versus non-sycophantic AI responses, they rated them about the same. The flattery was invisible to the people receiving it. But it wasn't inert. People who interacted with the sycophantic AI came away more convinced they were right, less willing to apologize, and more likely to say they'd use that AI again.

As co-author Dan Jurafsky told Stanford Report:

"What they are not aware of, and what surprised us, is that sycophancy is making them more self-centered, more morally dogmatic."

This study lends scientific credence to anecdotal stories that have been accumulating for a while now. For example, in August 2025, NPR reporter Emma Bowman tried using ChatGPT as a couples counselor during a disagreement with her boyfriend. The AI took her side, of course. It was only when she deliberately challenged the bot's framing that she recognized what was happening. A licensed marriage and family therapist who reviewed the exchange called it a textbook case of triangulation: a third party brought in to ease tension between two people — except this third party is designed to agree with whoever is talking.

I became aware of this at roughly the same time these stories broke because it happened to me.

I work in AI. I spend my days helping businesses use these tools well. So when my partner and I hit a rough patch, I wondered whether these new AI models, which seemed to know so much, could help us get through it.

Far from helping us find common ground, it drove a wedge between us. We each furiously cited our own AI's advice as an authority over the other's, and, tragically, over any of the wise humans around us whom we could have asked instead. We didn't realize what was happening until after the damage was done.

What You Can Do

Since then, we've had an agreement: no AI for personal issues, full stop.

First, set up guardrails. We enforce our agreement with custom instructions on both of our accounts that tell our agents, which are still so helpful with work and organizational tasks, to refuse to engage with personal or emotional topics. You can do this too; I covered how in a previous article.

Second, talk to your partner, family, and friends. Establish a shared norm that you don't use AI to seek personal advice. And if someone you love is leaning heavily on AI, help them find a therapist — a human one — who will challenge their assumptions instead of reinforcing them.

Third, if you catch yourself opening a chat window to reflect on something personal, don't. Call a friend. Write in a journal. Play a video game. Do anything else, really.

In Conclusion

Frontier AI companies may decide to train models that aren't quite so sycophantic in the future. And I'm sure some well-meaning software engineers (perhaps even I) will try to design an AI context system that takes input from both parties and acts as a genuinely helpful interpersonal assistant.

But the underlying problem will remain: LLMs do what the companies that train them want them to do. And those companies have only one concern: profit. As we've seen with the social media debacle, it's often not profitable to create technologies that encourage peace and harmony.¹

So personally, I'm preparing for a future where AI continues to be a double-edged sword. I recommend you do the same.

¹ In fact, quite the opposite, as OpenAI leadership was ready to make a sex bot just to support their bottom line.

AI was used in the writing of this article.
